Permute pytorch

12/28/2023

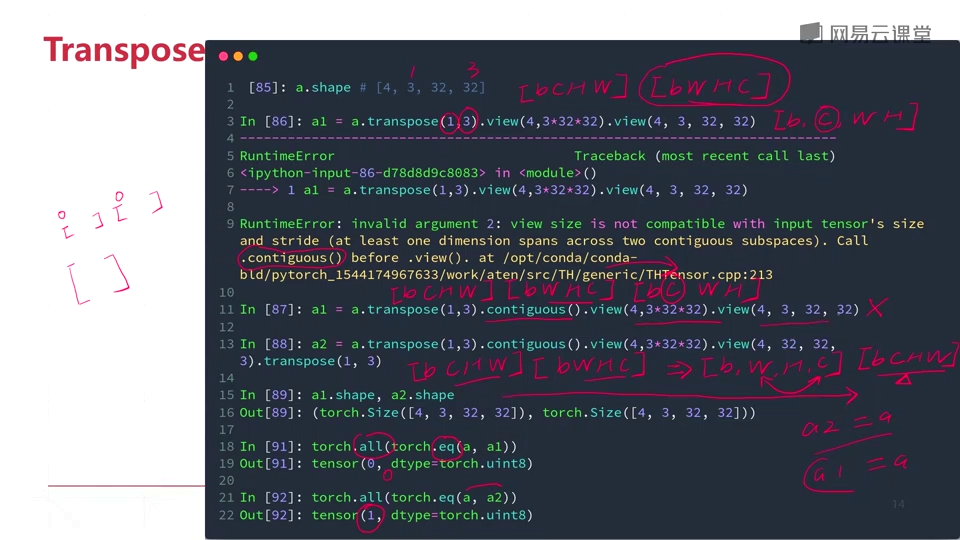

torch.bmm(input, mat2, *, out=None) → Tensor performs a batch matrix-matrix product of the matrices stored in input and mat2. input and mat2 must be 3-D tensors, each containing the same number of matrices. If input is a (b × n × m) tensor and mat2 is a (b × m × p) tensor, out will be a (b × n × p) tensor.

A common mistake with permute is a dimension mismatch. If encoded layers has a size of (32, 2), it has only 2 dimensions, so calling permute with 3 dimensions fails. permute expects exactly one index per dimension of the tensor.

For reshape, from the docs: Returns a tensor with the same data and number of elements as input, but with the specified shape. When possible, the returned tensor will be a view of input. Otherwise, it will be a copy. Contiguous inputs and inputs with compatible strides can be reshaped without copying, but you should not depend on the copying vs. viewing behavior. See torch.Tensor.view() on when it is possible to return a view. A single dimension may be -1, in which case it's inferred from the remaining dimensions and the number of elements in input.

Permute is quite different to view and reshape. view only works on contiguous tensors and never copies. reshape also works on non-contiguous tensors (in effect contiguous() + view): it tries to return a view if possible, otherwise it copies the data to a contiguous tensor and returns a view on that. Neither changes the order of the elements. permute reorders the dimensions themselves, so the same indices address different elements afterwards. Have a look at this example to demonstrate this behavior: starting from x = torch.arange(4*10*2).view(4, 10, 2), both x.view(4, 2, 10) and x.permute(0, 2, 1) have shape (4, 2, 10), but their contents differ.
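The difference is easier to see by running it. The following is a minimal sketch built on the tensor x from the text above; the variable names (v, p, r, a, b and the flags) are only illustrative, and the shapes chosen for the bmm call are an assumption made to match the (b × n × m) by (b × m × p) rule.

```python
import torch

x = torch.arange(4 * 10 * 2).view(4, 10, 2)

# view reinterprets the same memory with a new shape; element order is unchanged.
v = x.view(4, 2, 10)

# permute swaps axes 1 and 2, so p[i, j, k] == x[i, k, j].
p = x.permute(0, 2, 1)

same_shape = v.shape == p.shape        # both are (4, 2, 10)
same_values = torch.equal(v, p)        # contents differ
p_is_contiguous = p.is_contiguous()    # permute returns a non-contiguous view

# view cannot flatten the permuted tensor because its strides are incompatible.
try:
    p.view(4, 20)
    view_failed = False
except RuntimeError:
    view_failed = True

# reshape falls back to copying when a view is not possible.
r = p.reshape(4, 20)
r_is_copy = r.data_ptr() != x.data_ptr()

# A single -1 is inferred from the remaining dimensions.
inferred_shape = tuple(x.reshape(-1, 2).shape)

# Batch matrix product: (b, n, m) @ (b, m, p) -> (b, n, p).
a = torch.randn(4, 10, 2)
b = torch.randn(4, 2, 5)
out = torch.bmm(a, b)
```

In this sketch p still shares storage with x, while r does not, which is why code should not rely on whether reshape happened to copy.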

Not to be confused with permute, torch.randperm(n) returns a random permutation of integers from 0 to n - 1. Its generator argument (torch.Generator, optional) is a pseudorandom number generator for sampling.
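As a rough illustration of the generator argument, the sketch below seeds two separate torch.Generator objects identically and checks that they yield the same permutation. The seed value, the size 10, and the names g, g2, perm, data and shuffled are arbitrary choices for the example.

```python
import torch

# A dedicated generator keeps the sampling reproducible without
# touching the global random state.
g = torch.Generator()
g.manual_seed(0)
perm = torch.randperm(10, generator=g)

# A second generator with the same seed produces the same permutation.
g2 = torch.Generator().manual_seed(0)
perm_again = torch.randperm(10, generator=g2)

# Typical use: shuffle the entries of a tensor by indexing with the permutation.
data = torch.arange(10) * 10
shuffled = data[perm]
```

Note that this shuffles values along an axis, whereas permute only reorders the axes themselves.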
